Describing Enterprise Artificial Intelligence: Platform as a Service with Current AI Infrastructure

Artificial Intelligence (AI), a much-discussed concept, is widely seen as the future of information technology: it aims to reproduce human capacities for learning, perception and interaction at all levels of complexity. Enterprise AI applies these capabilities to augment the work of people in an organization for better results. Given the amount of information AI applications need in order to work, they cannot function without a database. Every modern application relies on one, whether it is a mobile, web or desktop app. Some apps use flat files, while others rely on in-memory or NoSQL databases.

Even traditional enterprise applications interacted with large database clusters running Microsoft SQL Server, Oracle and similar systems. In practice, every application needs a database.

Like databases, Artificial Intelligence (AI) is moving towards becoming a core component of modern applications. Sooner or later, almost every application that we use will depend on some form of AI.

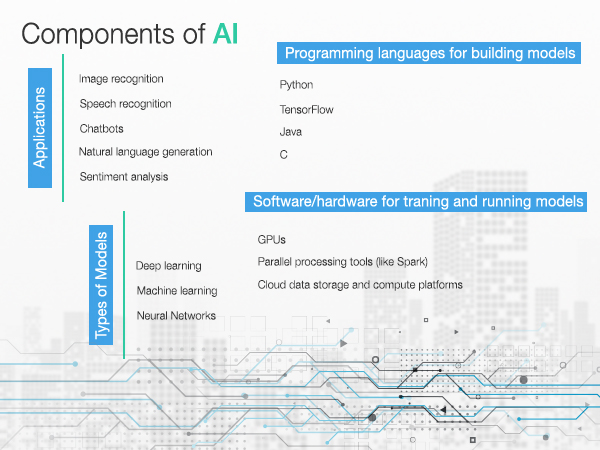

Enterprise AI simulates the cognitive functions of the human mind — learning, reasoning, perception, planning, problem solving and self-correction — with computer systems. Enterprise AI is a part of a range of business applications, such as expert systems, speech recognition and image recognition.

1. Start Consuming Artificial Intelligence APIs

This approach is the least disruptive way of getting started with AI. Many existing applications can become intelligent through integration with APIs for language understanding, image pattern recognition, text to speech, speech to text, natural language processing, and video search.

Let’s look at a concrete example: analyzing customer sentiment. Typically, all customer calls are directed to the customer service team and are recorded for random sampling. A supervisor routinely listens to these calls to assess quality and overall customer satisfaction. However, this analysis covers only a small subset of the calls the customer service team receives. This use case is an excellent candidate for AI APIs. Each recorded call can first be converted into text, which is then sent to a sentiment analysis API that returns a score representing the customer satisfaction level.

Best of all, analyzing each call takes only a few seconds, so the supervisor has visibility into the quality score of all calls in near real time. This approach enables the company to quickly escalate incidents involving unhappy customers or rude customer service agents. From CRM and finance to manufacturing, organizations stand to benefit considerably from the integration of AI.
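The call-scoring flow described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: `transcribe` and `sentiment_score` are hypothetical stand-ins for a real speech-to-text API and a real sentiment analysis API, the word lists are made up, and the escalation threshold is arbitrary.

```python
from dataclasses import dataclass


@dataclass
class RecordedCall:
    call_id: str
    transcript: str  # assumption: stored here so the sketch runs offline


# Illustrative word lists; a real sentiment API would use a trained model.
NEGATIVE = {"angry", "rude", "terrible", "cancel", "unacceptable"}
POSITIVE = {"thanks", "great", "helpful", "resolved", "happy"}


def transcribe(call: RecordedCall) -> str:
    """Hypothetical placeholder for a speech-to-text API call."""
    return call.transcript


def sentiment_score(text: str) -> float:
    """Hypothetical placeholder for a sentiment API; returns a score in [-1, 1]."""
    words = [w.strip(".,!?").lower() for w in text.split()]
    pos = sum(w in POSITIVE for w in words)
    neg = sum(w in NEGATIVE for w in words)
    total = pos + neg
    return 0.0 if total == 0 else (pos - neg) / total


def calls_to_escalate(calls, threshold=-0.5):
    """Score every call and return the IDs at or below the threshold."""
    return [c.call_id for c in calls
            if sentiment_score(transcribe(c)) <= threshold]


calls = [
    RecordedCall("c1", "Thanks, the agent was great and helpful."),
    RecordedCall("c2", "This is unacceptable, the agent was rude. Cancel my plan."),
]
print(calls_to_escalate(calls))  # ['c2']
```

In a real deployment the two placeholder functions would be replaced by calls to the provider of your choice; the surrounding loop, which scores every call rather than a random sample, is the part that matters.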

Some of the top AI platforms and API providers are:

- Google Cloud ML Services (https://cloud.google.com/ml-engine/reference/)

- IBM Watson Services (https://console.bluemix.net/catalog/?category=ai)

- Microsoft Cognitive Services (https://docs.microsoft.com/en-us/azure/cognitive-services/)

- Clarifai (https://clarifai.com/developer/guide/)

2. Build and Deploy Custom AI Models in the Cloud

We have seen how integrating Artificial Intelligence with applications can take the customer experience to the next level. But while consuming APIs is a great start for AI, it is often limiting for enterprises.

This step includes acquiring data from a variety of existing sources and implementing a custom machine learning model. It requires creating data processing pipelines, identifying the right algorithms, training and testing machine learning models and finally deploying them in production.

Just as Platform as a Service (PaaS) takes code and scales it in the production environment, a Machine Learning as a Service (MLaaS) offering takes data and exposes the final model as an API endpoint. The benefit of this deployment pattern lies in using cloud infrastructure for training and testing the models. Customers can spin up infrastructure powered by advanced hardware configurations based on GPUs and FPGAs.
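To make the "data in, API endpoint out" idea concrete, here is a minimal sketch using only the Python standard library. It is not how any particular MLaaS product works: `train` and `make_endpoint` are hypothetical names, the model is a simple least-squares line fit, and the "endpoint" is a plain function that accepts and returns JSON rather than a hosted HTTP service.

```python
import json


def train(rows):
    """Fit y = slope * x + intercept by ordinary least squares."""
    n = len(rows)
    mean_x = sum(x for x, _ in rows) / n
    mean_y = sum(y for _, y in rows) / n
    var_x = sum((x - mean_x) ** 2 for x, _ in rows)
    cov_xy = sum((x - mean_x) * (y - mean_y) for x, y in rows)
    slope = cov_xy / var_x
    return {"slope": slope, "intercept": mean_y - slope * mean_x}


def make_endpoint(model):
    """Wrap a trained model in a JSON-in, JSON-out request handler."""
    def predict(request_body: str) -> str:
        x = json.loads(request_body)["x"]
        y = model["slope"] * x + model["intercept"]
        return json.dumps({"prediction": y})
    return predict


# Toy training data that follows y = 2x + 1 exactly.
training_data = [(1, 3), (2, 5), (3, 7), (4, 9)]
endpoint = make_endpoint(train(training_data))
print(endpoint('{"x": 10}'))  # {"prediction": 21.0}
```

The caller of `endpoint` never sees the training code or the hardware it ran on, which is the separation an MLaaS offering provides at much larger scale.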

Platforms that offer Machine Learning as a Service:

- Amazon ML (https://docs.aws.amazon.com/machine-learning/index.html#lang/en_us)

- Azure ML Studio (https://docs.microsoft.com/en-us/azure/machine-learning/)

- Google Cloud ML Engine (https://cloud.google.com/ml-engine/docs/)

- Bonsai AppNexus (https://wiki.appnexus.com/display/api/Welcome)

- BigML (https://bigml.com/api)

3. Run Open Source AI Platforms On-Premise

The final step in AI-enabling applications is to invest in the infrastructure and teams required to generate and run the models locally. This is for enterprise applications with a high degree of customization and for those customers who need to comply with policies related to data confidentiality.

If MLaaS is similar to PaaS, then running AI infrastructure locally is comparable to a private cloud. Customers need to invest in modern hardware based on SSDs and GPUs designed for parallel processing of data. They also need expert data scientists who can build highly customized models based on open source frameworks. The biggest advantage of this approach is that everything runs in-house. From data acquisition to real-time analytics, the entire pipeline stays close to the applications. On the flip side, the downsides are the operating expense (OPEX) and the need for experienced data scientists.

Customers implementing their own AI infrastructure typically use one of the following open source platforms for machine learning and deep learning:

- MXNet (https://mxnet.incubator.apache.org/api/python/index.html)

- Microsoft Cognitive Toolkit (https://docs.microsoft.com/en-us/cognitive-toolkit/)

- TensorFlow (https://www.tensorflow.org/api_docs/)

- Theano (http://deeplearning.net/software/theano/library/index.html)

If you want to get started with AI, explore the APIs first before moving to the next step. For developers, the hosted MLaaS offerings may be a good start. Artificial Intelligence is evolving to become a core building block of contemporary applications. AI is all set to become as common as databases. It’s time for organizations to create the roadmap for building intelligent applications.

AI Data Evolutions: Data Processing and Neural Networks

Today, we feed large volumes of data to computers so that they can learn from it using deep learning technologies, and this is a major reason organizations are taking up AI initiatives.

Neural networks process information in a way similar to how the human brain does. The network is composed of a large number of highly interconnected processing elements (neurons) working in parallel to solve a specific problem. Neural networks learn by example to solve complex signal processing and pattern recognition problems, including speech-to-text transcription, handwriting recognition and facial recognition.
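"Learning by example" can be illustrated with the smallest possible case: a single artificial neuron (a perceptron) that learns the logical AND function from four labeled examples. This is a toy sketch, not a full neural network; the function names, learning rate and epoch count are illustrative choices.

```python
def step(z):
    """Threshold activation: the neuron fires (1) only for positive input."""
    return 1 if z > 0 else 0


def train_perceptron(examples, epochs=20, lr=1):
    """Adjust weights after each example using the perceptron learning rule."""
    w = [0, 0]
    b = 0
    for _ in range(epochs):
        for (x1, x2), target in examples:
            out = step(w[0] * x1 + w[1] * x2 + b)
            err = target - out  # 0 when correct, +1 or -1 when wrong
            w[0] += lr * err * x1
            w[1] += lr * err * x2
            b += lr * err
    return w, b


# Labeled examples of logical AND: output is 1 only when both inputs are 1.
and_examples = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]
w, b = train_perceptron(and_examples)

predictions = [step(w[0] * x1 + w[1] * x2 + b) for (x1, x2), _ in and_examples]
print(predictions)  # [0, 0, 0, 1]
```

Nothing in the code states the rule for AND; the weights are nudged whenever a prediction is wrong until the examples are classified correctly. Real networks connect many such units in layers and train them with gradient-based methods.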

Data processing is, generally, “the collection and manipulation of items of data to produce meaningful information.” In this sense it can be considered a subset of information processing, “the change (processing) of information in any manner detectable by an observer.”

A key requirement of AI data processing is high-quality data. While data quality has always been important, it’s arguably more vital than ever with AI initiatives.
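As a rough illustration of what checking data quality can mean in practice, the sketch below counts rows with missing required fields and exact duplicate rows before data is used for training. The function name `quality_report`, the field names and the sample records are all hypothetical, and real pipelines usually check much more (ranges, formats, label consistency).

```python
def quality_report(records, required_fields):
    """Count rows with missing required fields and exact duplicate rows."""
    missing = 0
    duplicates = 0
    seen = set()
    for row in records:
        # A field counts as missing when it is absent, None, or empty.
        if any(row.get(f) in (None, "") for f in required_fields):
            missing += 1
        key = tuple(sorted(row.items()))
        if key in seen:
            duplicates += 1
        seen.add(key)
    return {"rows": len(records), "missing": missing, "duplicates": duplicates}


records = [
    {"customer_id": 1, "transcript": "Thanks for the help"},
    {"customer_id": 2, "transcript": ""},
    {"customer_id": 1, "transcript": "Thanks for the help"},
]
print(quality_report(records, ["customer_id", "transcript"]))
# {'rows': 3, 'missing': 1, 'duplicates': 1}
```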

In 2017, research firm Gartner described a few of the myths related to AI. One is that AI is a single entity that companies can buy. In reality, it’s a collection of technologies used in applications, systems and solutions to add specific functional capabilities, and it requires a robust AI infrastructure.

Another myth is that every organization needs an AI strategy or a chief AI officer. The fact is, although enterprise AI technologies will become pervasive and increase in capabilities in the near future, companies should focus on business results that these technologies can enhance.

Conclusion

To conclude, almost everyone will eventually use AI-powered applications, since many existing applications can become intelligent through integration with APIs for language understanding, image pattern recognition, text to speech, speech to text, NLP and video search.

With the help of AI APIs, we can understand customer problems more easily and offer customers a better product. Custom AI models can also be built and deployed in the cloud, taking data processing to the next level.

Building such models involves acquiring data from a variety of sources, creating data processing pipelines, identifying the right algorithm and deploying the resulting model in the production environment.

Hence, we recommend starting with AI APIs to reduce complexity and make processes more effective.

FAQs

What is Enterprise AI?

Enterprise AI simulates human cognitive functions like learning and reasoning, enhancing business applications such as speech recognition, image recognition, and expert systems.

How can businesses start using AI with minimal disruption?

Businesses can begin by integrating AI APIs for functions like sentiment analysis, speech-to-text, or image recognition, enabling existing applications to become intelligent.

What is Machine Learning as a Service (MLaaS)?

MLaaS allows businesses to build and deploy custom AI models in the cloud, leveraging data processing pipelines and advanced hardware for training and testing.

Why might a company choose to run AI platforms on-premise?

On-premise AI platforms offer high customization and data confidentiality, ideal for enterprises with strict compliance needs, though they require significant investment in hardware and expertise.

How do neural networks contribute to Enterprise AI?

Neural networks mimic how the human brain processes information, using many interconnected processing elements to solve complex tasks like speech transcription, handwriting recognition, and facial recognition.